Publications

CeADAR Thought Leadership

Beyond Compliance: Why Responsible AI Means Competitive AI

Date: April 2026

CeADAR Author: Julia Palma, EU Programme Manager

The Lessons of the "Red Flag Act"

In the world of digital transformation, we often hear that we must choose between innovation and regulation. We are told that you can either "move fast and break things" or be safe and slow. At CeADAR, we believe this is a false dichotomy.

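The UK's 1865 Locomotive Act, nicknamed the "Red Flag Act", required a person carrying a red flag to walk ahead of mechanically propelled road vehicles, effectively holding them to walking pace. That was regulation acting as a brake. But if we look back at the history of technology, we see that regulation, when done right, doesn't act as a brake; it acts as the infrastructure that allows us to go faster.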

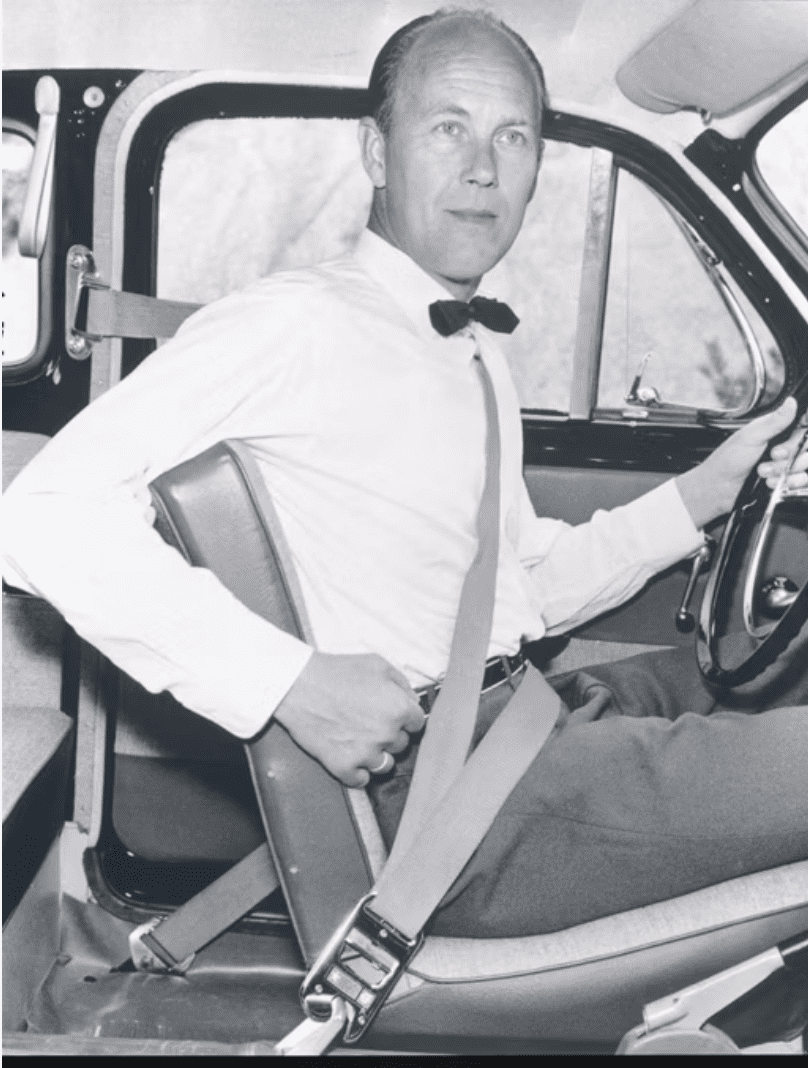

Trustworthiness as a Premium: The Volvo Strategy

In 1959, Volvo invented the three-point seatbelt. Rather than keeping the patent to themselves, they shared it for the common good. They realised that for the automotive market to scale, the public had to trust the technology. Decades later, Volvo is still synonymous with safety, a brand position that has outlasted countless market trends.

On the flip side, we have seen what happens when ethics take a backseat. The 2015 "Dieselgate" emissions scandal showed that cutting corners on regulation can cause reputational and financial damage lasting more than a decade.

By 2029, the automotive industry will see new mandatory safety requirements, such as Direct Vision standards that improve how well drivers can see pedestrians and cyclists. In the IT and GovTech sectors, we are seeing a similar shift. Features that were considered "nice-to-have" ethical extras just two years ago, such as explainability and bias mitigation, are becoming mandatory. If you wait until regulation enters into force to build "trust" into your systems, you will be retrofitting a legacy engine while your competitors are already in the fast lane.

A Joint Effort: The Ecosystem of Trust

Building Responsible AI is a team sport involving the entire ecosystem. At CeADAR, we lead the Trustworthy and Ethical AI taskforce at the BDVA (Big Data Value Association), collaborating with global leaders to turn high-level principles into actionable insights. As our recent report shows, large companies recognise that Responsible AI is a source of competitive advantage, not a constraint.

To achieve this, we build on the 7 Pillars of Trustworthy AI established by the European Commission's High-Level Expert Group on AI. We help you ask the right questions to explore each pillar in a practical way, and to find tools that address and mitigate undesirable behaviours:

- Human agency and oversight

- Technical robustness and safety

- Privacy and data governance

- Transparency

- Diversity, non-discrimination, and fairness

- Societal and environmental well-being

- Accountability

The Critical "Question 0"

Before we even reach the legal requirements of the AI Act, responsible innovation starts with a simple question: Is AI actually the best approach for this problem? Sometimes, the most responsible decision is to recognise that a simpler tool can solve the issue without introducing the new risks or ethical dilemmas that AI can bring. To develop Responsible AI, we must first analyse the situation critically rather than defaulting to the newest tech stack. We can help you decide whether a curated dashboard, a statistical model or data transformation tools are the right solution to achieve your goals.

From Theory to Practice

At CeADAR, we apply these pillars to real-world industry challenges. Through projects like MANOLO, we are developing lightweight, high-quality, trustworthy AI models for edge devices. Whether it is "Human-AI Teaming" on a manufacturing floor with PAL Robotics or helping MedTech companies like Bitbrain handle sensitive data securely on-device, we are demonstrating that ethics and performance go hand-in-hand.

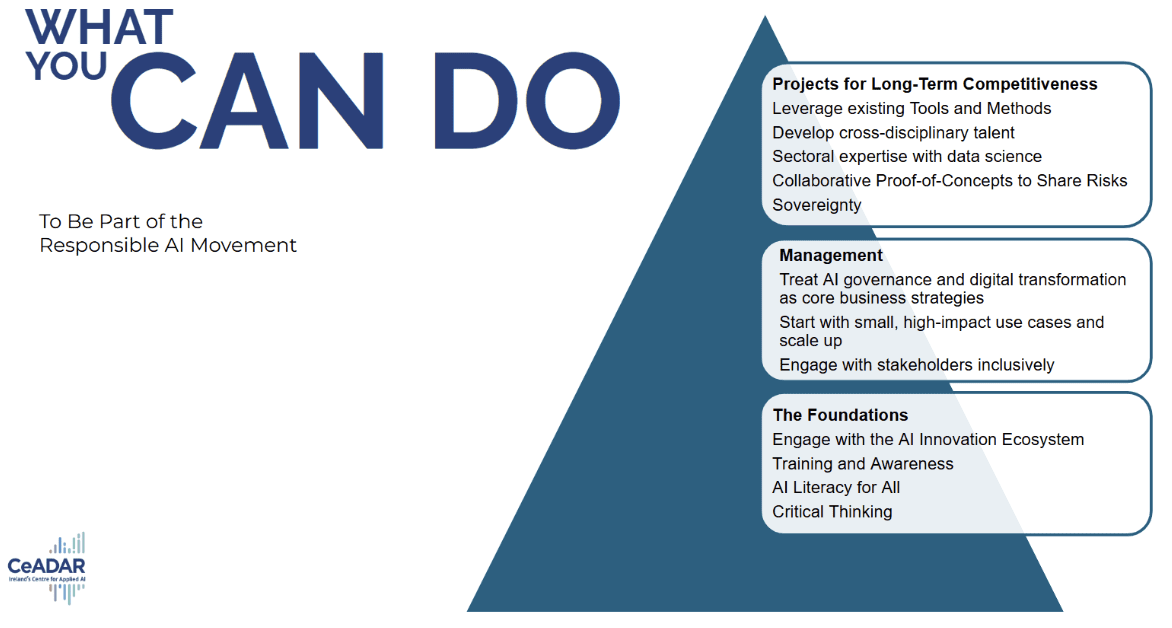

Your Roadmap to Competitive AI

If you want to transition from “compliance” to “competitiveness”, follow these simple steps:

- Build AI Literacy: Go beyond technical training and promote critical thinking across your organisation, so that people understand both the potential and the risks of AI. (CeADAR offers free, vendor-agnostic courses to help you meet the AI Act's AI literacy obligations.)

- Ask the Hard Questions: When developing systems in-house or working with vendors, interrogate the origins of their data, the repeatability of their results, their governance and their mitigation checkpoints. Evaluate whether they can help you complete the required documentation, such as a Fundamental Rights Impact Assessment (FRIA) or a Data Protection Impact Assessment (DPIA).

- Governance as Strategy: Involve senior management in AI governance early. Treat AI as a matter of business strategy, not just an IT task. Keep your stakeholders informed and listen to their concerns.

Regulation makes AI legal, but trust is what makes it profitable. Don’t build your AI systems to satisfy an auditor; build them to win the trust of your customers. The AI Act defines the road. Trustworthy AI is the vehicle that wins the race.